Explainable AI in Controlling

- Partner: MLP SE, University of Ulm
- End date: project finished recently, active research still ongoing

Artificial Intelligence (AI) offers significant potential to support data-driven decision-making, particularly in forecasting. However, in practice, the adoption of AI remains limited due to its “black-box” nature. The lack of transparency makes it difficult for practitioners to understand, validate, and trust AI-generated forecasts as a basis for decision-making. Addressing this challenge is critical for enabling the broader use of AI in business contexts. One promising approach lies in Explainable AI (XAI), which aims to make AI outputs interpretable without compromising predictive performance.

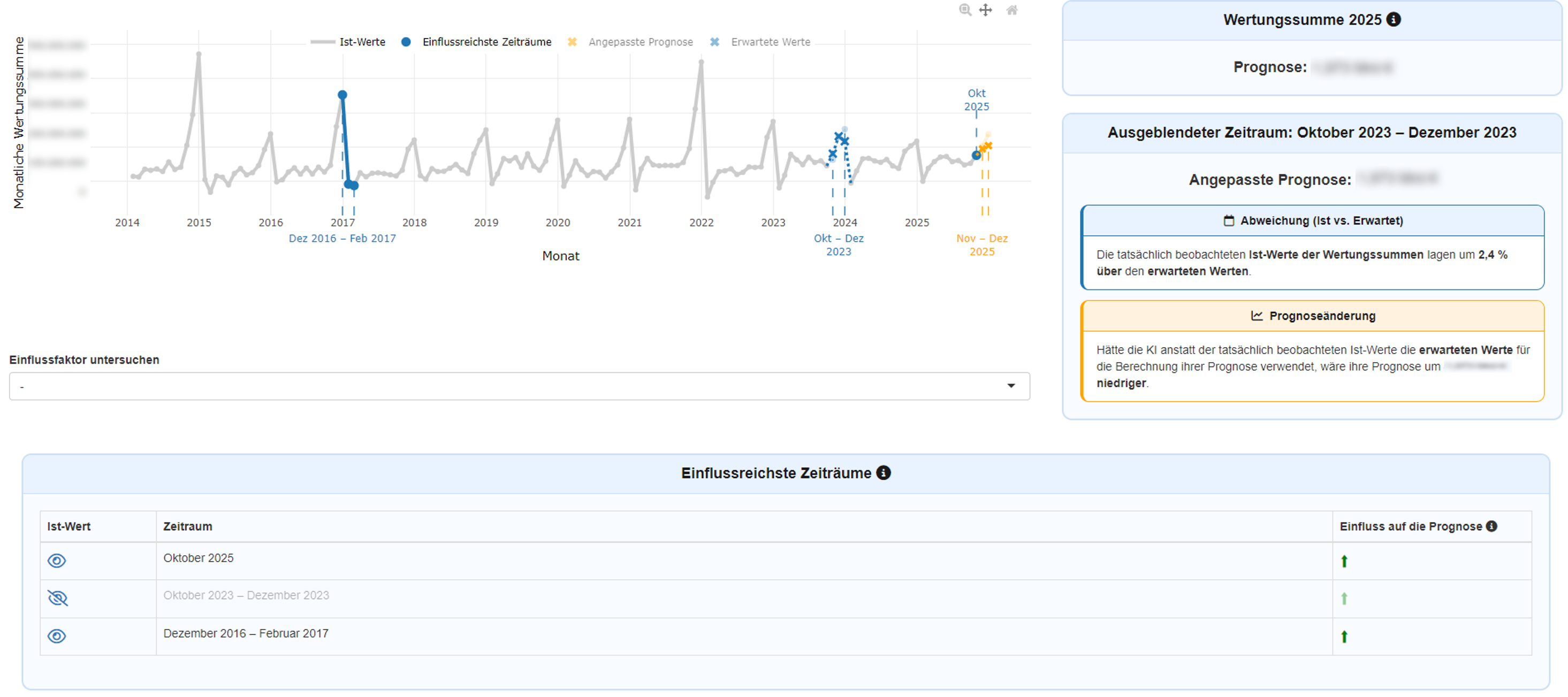

The project develops novel methods that make AI-generated forecasts based on time series more transparent and interpretable for domain experts. In collaboration with MLP SE, we piloted and evaluated these methods under real-world conditions in controlling, a domain where precise forecasts directly inform strategic decisions.

The findings show that AI-based forecasts can be enriched with meaningful, automatically generated explanations without reducing their predictive accuracy. These explanations improve users' ability to understand and assess the forecasts, which makes the forecasts more suitable as a basis for decision support.

The project makes an important contribution to integrating AI into business decision-making processes, particularly where those decisions rely on time series data. By enabling transparent and interpretable forecasts, it lays the foundation for a more trustworthy and responsible use of AI in organizations.

Illustration of the application